Humans Provide the Context

D-Day shows that your models are only as good as your operators.

Daryl Wieland is Senior Director of Partnerships at Palantir and an Adjunct Professor of Finance at George Mason University.

In the first days of June 1944, General Dwight D. Eisenhower possessed more intelligence data than any military commander in human history. Weather models, tide charts, reconnaissance photographs, intercepted communications, resistance reports, and statistical analyses of German defensive positions flooded his headquarters. Yet when the meteorologists’ models showed a narrow window of acceptable weather, contradicting earlier predictions, Eisenhower faced a decision no algorithm could make for him. He understood something that transcends data: context matters.

OODA Loop

Today’s military planners operate in an environment that would have seemed like science fiction to Eisenhower. America possesses computational power that can simulate thousands of battle scenarios in seconds. Our models evaluate satellite imagery, signals intelligence, open-source data, human intelligence, biometric information, and real-time sensor feeds to generate probability assessments with decimal-point precision. We can model logistics chains, predict maintenance failures, and estimate casualty rates with unprecedented sophistication. These capabilities are transformative, but they present a profound danger if we rely on them without context or forget that every algorithm and model is valid only “under these conditions.”

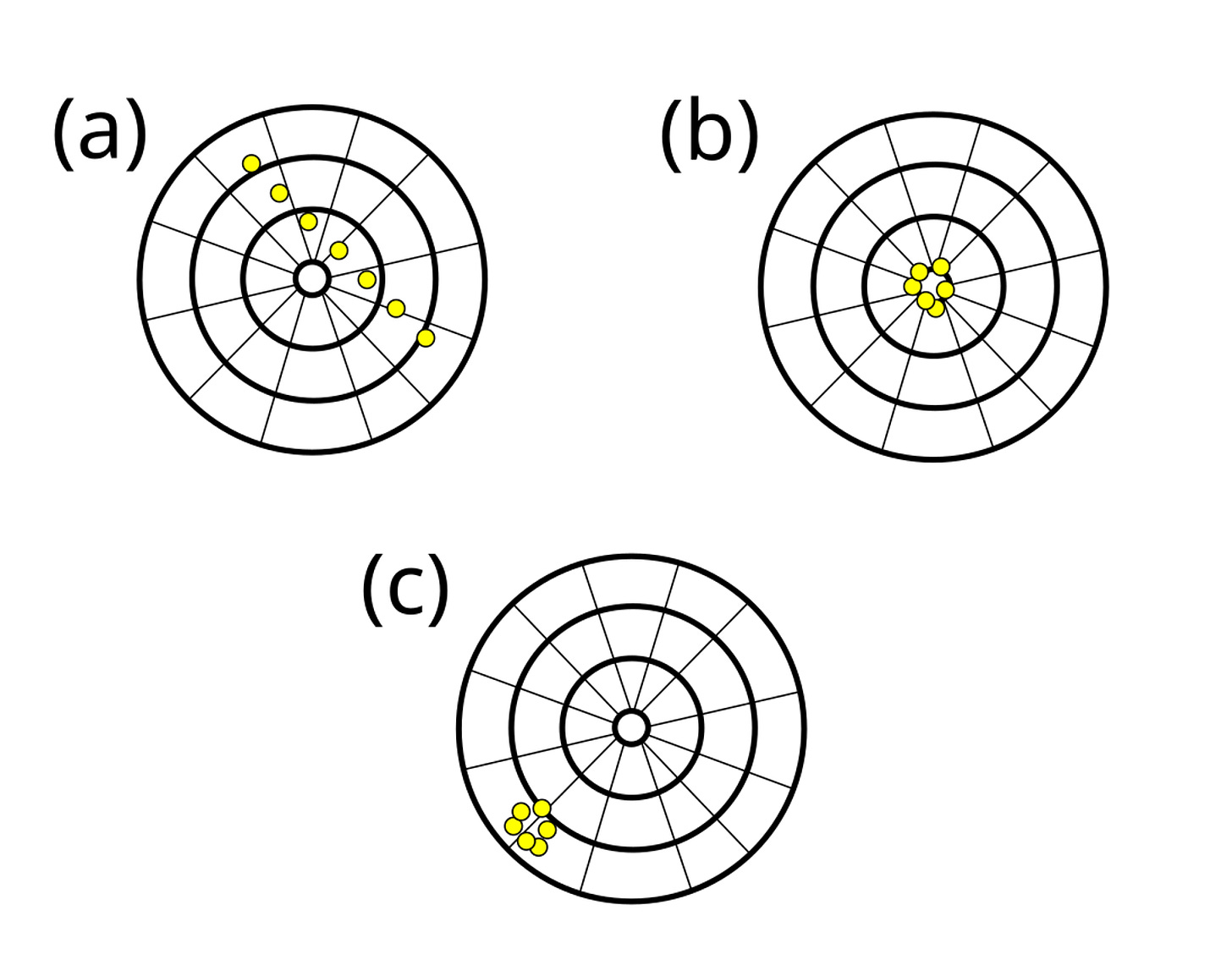

Ultimately, it’s about decision advantage and tempo. It’s about tightening the Observe, Orient, Decide, Act (OODA) loop that Air Force Colonel John Boyd made famous. It’s about the team that can make better decisions faster winning. Models and automation can massively accelerate your decision cycle. That’s real advantage. But speed without accuracy means you’re accelerating toward the wrong outcome. It is like option c in this graphic where precision without accuracy doesn’t help:

The goal is rapid, high-quality decisions. That requires tight human-machine teaming where the human interprets the context.

Human-Machine Teaming

The danger lies not in the models themselves, but in our relationship with them. The failure mode we see repeatedly is treating this as a zero-sum game. Either we trust human judgment (slow, inconsistent, doesn’t scale) or we trust the machines (fast, consistent, scalable). That’s a false dichotomy. The right answer is integrated teaming where humans and machines amplify each other’s strengths and compensate for each other’s weaknesses.

A good example of successful human-machine teaming is how PayPal combines automated machine learning models that assign risk scores and flag suspicious transactions with human fraud analysts who manually review uncertain cases, adjust custom filters, and provide feedback that continuously improves the AI models’ accuracy.

This hybrid approach allows machines to process vast numbers of transactions at scale, while human experts catch context-specific fraud patterns that algorithms might miss, such as social engineering schemes, and use their domain expertise to help the system adapt to evolving fraud tactics.
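The triage pattern described above can be sketched in a few lines of Python. This is an illustration only, not PayPal’s actual system: the thresholds, the `Transaction` type, and the function names are all assumptions made for the example.

```python
from dataclasses import dataclass

# Hypothetical thresholds, chosen only for illustration.
AUTO_APPROVE_BELOW = 0.20
AUTO_BLOCK_ABOVE = 0.90


@dataclass
class Transaction:
    tx_id: str
    risk_score: float  # model output, assumed to lie in [0, 1]


def route(tx: Transaction) -> str:
    """Let the machine settle clear cases and send uncertain ones to a human."""
    if tx.risk_score < AUTO_APPROVE_BELOW:
        return "approve"
    if tx.risk_score > AUTO_BLOCK_ABOVE:
        return "block"
    return "human_review"


def record_feedback(labels: list, tx: Transaction, analyst_says_fraud: bool) -> None:
    """Store the analyst's verdict so it can inform later retraining."""
    labels.append((tx.tx_id, tx.risk_score, analyst_says_fraud))


queue = [Transaction("t1", 0.05), Transaction("t2", 0.55), Transaction("t3", 0.97)]
decisions = {tx.tx_id: route(tx) for tx in queue}

labels = []
for tx in queue:
    if decisions[tx.tx_id] == "human_review":
        # In practice the verdict comes from an analyst; it is hard-coded here.
        record_feedback(labels, tx, analyst_says_fraud=True)
```

The design choice worth noticing is the middle band: the machine handles volume, and the human sees only the cases where context is most likely to change the answer.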

We have become a civilization intoxicated with quantification, mistaking precision for accuracy and correlation for causation. When a model produces an output, particularly one rendered in clean graphics with confidence intervals, it carries an aura of objectivity that human judgment lacks. “According to the data” has become the modern equivalent of “thus saith the Lord,” an incantation that supposedly settles arguments and justifies decisions. But data, like scripture, requires interpretation. And interpretation requires context.

Under These Conditions

Every model operates within boundaries, explicit or implicit. Interpreting data “under these conditions” means acknowledging that conclusions drawn from data are specific to the environment, models, and variables present during collection. In other words, context is king.

A logistics model built on assumptions about fuel consumption in temperate climates will fail catastrophically in Arctic conditions. A pattern-of-life analysis trained on peacetime behavior becomes dangerously unreliable when populations are displaced by combat. A terrain analysis model that excels at predicting trafficability in open desert may prove worthless in dense urban environments where a single blocked intersection reshapes an entire operation. These limitations seem obvious when stated plainly, yet they fade from view when we encounter the seductive authority of the output itself.
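One way to keep those limits in view is to record a model’s validated conditions next to the model and check them before trusting an output. The Python sketch below assumes a hypothetical `Envelope` class and made-up limits for a fuel-consumption model; it does not describe any fielded tool.

```python
from dataclasses import dataclass


@dataclass
class Envelope:
    """The range of conditions a model was built and validated for."""
    limits: dict  # variable name -> (low, high)

    def violations(self, conditions: dict) -> list:
        """List every way the current conditions fall outside the envelope."""
        problems = []
        for name, (low, high) in self.limits.items():
            if name not in conditions:
                problems.append(f"{name}: not reported")
            elif not low <= conditions[name] <= high:
                problems.append(f"{name}={conditions[name]} outside [{low}, {high}]")
        return problems


# Hypothetical limits for a fuel model validated in temperate climates.
fuel_model_envelope = Envelope({"temperature_c": (-5, 35), "grade_pct": (0, 12)})

temperate = {"temperature_c": 18, "grade_pct": 4}
arctic = {"temperature_c": -30, "grade_pct": 4}

temperate_problems = fuel_model_envelope.violations(temperate)
arctic_problems = fuel_model_envelope.violations(arctic)
```

An empty list does not prove the output is right. It only says the model is being used where it was designed to work; a non-empty list is a prompt for the operator to apply judgment before acting on the number.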

The problem compounds as modeling becomes democratized. Where sophisticated analysis once required expensive specialists and rare computational resources, today’s junior officer can run complex simulations on a laptop. This accessibility is valuable because it decentralizes analytical capability and speeds decision cycles. But it also means that the human capital required to interpret results responsibly must scale proportionally. As we distribute analytical power, we must also distribute the insight that leads to wisdom.

Decision Dominance

When I talk to defense contractors and customers about deploying advanced analytics, I remind them that human-machine teaming matters and that the human is part of the solution. Train your analysts. Build feedback loops. Create curious and skeptical cultures that question the model and the inputs.

This is about building decision support for warfighters operating in complex and contested environments. The technology is incredible. But it’s in service of human judgment, not a replacement for it. Get that relationship right and the result is decision dominance. Get it wrong and the decision making will suffer.

Military history offers sobering examples of what happens when that relationship goes wrong. During the Vietnam War, Secretary of Defense Robert McNamara brought unprecedented analytical rigor to military planning. Body counts, sortie rates, weapons-destroyed ratios, and hamlet evaluation surveys generated mountains of data suggesting progress. The models said we were winning. Yet these metrics existed within a context the data couldn’t capture: a political war being fought as a military one, a population whose loyalty couldn’t be quantified, and an enemy whose will the statistics fundamentally misunderstood. The data wasn’t wrong; it simply couldn’t answer the questions that mattered.

Using Common Sense

Consider a more recent example: predictive targeting models that assess strike options. These tools can calculate probable collateral damage, mission success rates, and follow-on effects with remarkable sophistication. They are invaluable assets. But they cannot tell you whether destroying a particular target at a particular moment advances strategic objectives, how the strike will be perceived regionally, or whether the second-order effects align with your theater commander’s intent. They operate within a narrower context than the decision requires.

This is where the irreplaceable value of human judgment emerges. Common sense, which as Voltaire noted is not so common, provides the critical mission ingredient. It’s the experienced planner who recognizes that a model trained on historical data cannot account for an adversary’s recent doctrinal shift. It’s the intelligence analyst who understands that unusual patterns in communications intercepts might reflect religious holidays rather than operational preparation. It’s the logistician who knows that convoy timelines need padding because troops are exhausted, even if the fuel and route analysis say otherwise.

Healthy Skepticism Required

The responsibility that accompanies our analytical power is to cultivate this judgment systematically. It means training our people to interrogate and understand their models’ assumptions rather than simply run the models and accept the outputs. It means encouraging healthy skepticism even toward, and especially toward, results that confirm our existing beliefs. It means creating organizational cultures where questioning the data is considered prudent rather than obstructive.

We must teach our warfighters to ask essential questions: What assumptions underpin this model? What data fed these algorithms? What range of conditions was this tool designed for? What factors exist in my operational environment that the model cannot capture? When the model’s assessment contradicts my observations, which deserves greater weight?

The goal is not to reject analytical tools but rather to employ them properly. Models are force multipliers for human judgment, not replacements for it. They narrow uncertainty, reveal patterns, and free cognitive resources for higher-order thinking. But they remain tools, and tools require skilled operators who understand both their capabilities and limitations.

Ultimately It Was the General’s Decision

General Eisenhower wrote two key messages before D-Day. The first was his inspiring “Order of the Day” to the troops on June 6, 1944, rallying them for the “Great Crusade” with confidence in victory. The second was a secret “failure message” taking sole blame if the landings failed, emphasizing bravery and devotion to duty.

The “Order of the Day” boosted morale, acknowledging the tough fight ahead but highlighting Allied strength, while the failure message underscored his immense responsibility, a testament to his leadership under extreme pressure.

Eisenhower ultimately gave the “go-ahead” and launched the massive D-Day invasion based on the weather model’s narrow window, but he made that choice understanding the broader context: strategic timing, alliance politics, troop morale, and operational alternatives. The data informed his decision; it didn’t make it. That distinction, preserved through decades of technological advancement, remains as critical today as it was on that gray morning in Portsmouth.

The machines can calculate. Only humans can command.